The Computational Case for Hypocrisy

A guest post by Aditya Kulkarni.

Today we are sharing a guest post by Aditya Kulkarni, a software engineer interested in the intersection of AI and evolutionary psychology. It’s a little off the beaten path for us, but we hope you’ll find it interesting! - The Living Fossils

The Press Secretary Paradox

Last week, I found myself standing in front of an open refrigerator, wolfing down a piece of chocolate cake. I was, theoretically, on a strict low-carb diet. When my wife walked into the kitchen and caught me mid-bite, my brain didn’t hesitate. It didn’t apologize. It didn’t admit defeat.

“I’m going for a run later,” I said, wiping frosting from my mouth. “I need the glucose spike. You gotta carbo-load.”

The truth is, I didn’t know I was going for a run until the words left my mouth.

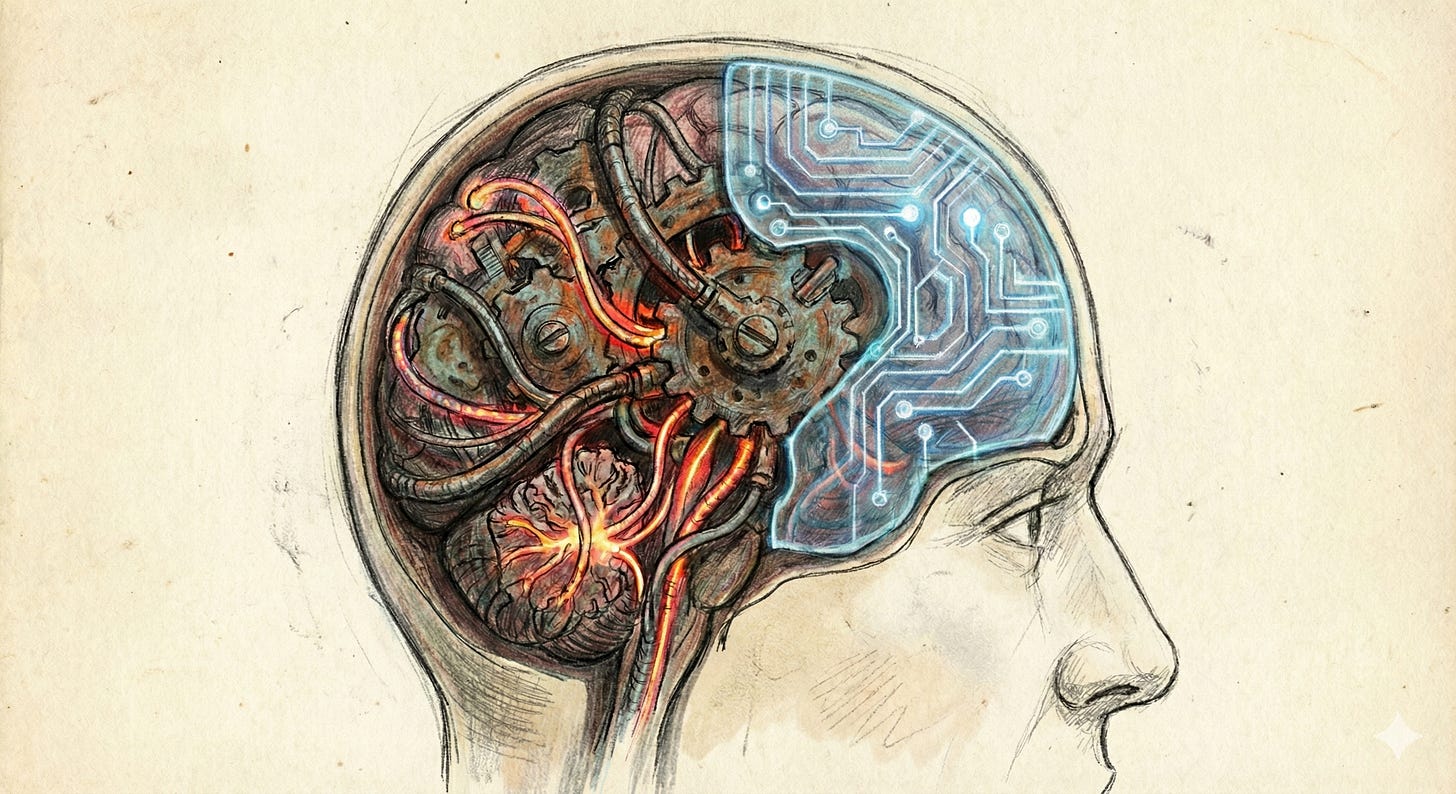

This immediate, reflexive justification is what Rob has called the “Press Secretary” module. He and colleagues have argued that the human mind is not a unitary “Self” that makes decisions. Instead, it is a loose collection of modular drives (hunger, status, lust) that operate largely outside our awareness. The conscious “I” is not the President in the Oval Office making the choices; it is the Press Secretary in the briefing room. Its job is to observe what the administration has already done (eating the cake) and spin a plausible narrative for the public (“It was for energy”).

From a psychological and sociological perspective, we understand why this system exists. It offers immense social utility. We are constantly being judged by our peers, and the ability to justify selfish actions with noble motives allows us to navigate the social hierarchy without losing status. Furthermore, as Rob and others have pointed out, the best way to convince others of a lie is to believe it yourself.

We are designed to be hypocrites. In this context, hypocrisy is not a moral failing, but a structural feature: a deliberate disconnection between the module that acts and the module that explains. This separation makes us better at persuasion.

While we understand the social utility of the Press Secretary, I want to ask the engineering question: Why did evolution pick this particular solution?

Why build a fractured system in which the “Self” is not involved in decision-making? What biological constraints pushed the brain to invent a Press Secretary rather than simply fix the President?

Psychology can describe what the Press Secretary does for us socially, but it has much less to say about the engineering constraints that shaped it.

Luckily, we’re living in the age of artificial intelligence. For the first time in history, we are not just observing minds; we are building intelligences from scratch. And recent breakthroughs suggest that “hypocrisy” isn’t just a clever social strategy—it is a computational necessity.

The High Cost of Changing Your Mind

Training massive AI models like Gemini or ChatGPT is an exercise in brute force. It costs hundreds of millions of dollars and requires server farms the size of industrial parks. The result of this process is a “Base Model”—a frozen, complex network of mathematical weights that “knows” how to predict the next word in a sentence.

Humans have an equivalent “Base Model,” too.

It resides in evolutionarily older decision systems that operate largely outside conscious processes. Just like an LLM, this biological base model was pre-trained on a massive dataset: millions of years of evolutionary trial and error. Its weights are heavily optimized for a specific set of survival outputs: Consume high calories. Pursue mating opportunities. Dominate rivals.

But the environment changes faster than the hardware. And even if evolution had the time to catch up, it would still face a computational deadlock.

What happens when the survival heuristic—consume all available glucose—becomes a liability in a modern world saturated with refined sugar? How do we “subtract the weights” from a synaptic drive that has kept our ancestors alive for millions of years?

As AI researchers have discovered, it is notoriously difficult to subtract from a neural network. If you take a fully trained network and try to force it to “unlearn” a core concept, or aggressively “retrain” it on new, contradictory data, you risk triggering a phenomenon known as catastrophic forgetting: training on the new data overwrites the weights that encoded earlier skills, and performance on previously learned tasks collapses. Because knowledge in a neural network is distributed across billions of connections, you cannot simply isolate and delete a specific bad behavior without unraveling the rest of the system. If you force the model to unlearn “aggression,” you might accidentally degrade unrelated abilities, such as navigating terrain or recognizing faces.
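Catastrophic forgetting is easy to reproduce at toy scale. The sketch below is a hypothetical example, not drawn from any real model: a three-weight linear model is trained on “task A,” then fine-tuned on “task B” alone. Because both tasks share the first weight, fitting B pulls that weight away from the value A depended on, and task A’s error climbs even though nobody asked the model to forget it.

```python
# Toy catastrophic-forgetting demo: a hypothetical 3-weight linear model,
# not a real neural network. Feature 0 is shared by both tasks.

def predict(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))

def mse(w, data):
    return sum((predict(w, x) - y) ** 2 for x, y in data) / len(data)

def train(w, data, lr=0.1, steps=500):
    """Plain full-batch gradient descent on mean squared error."""
    w = list(w)
    for _ in range(steps):
        grad = [0.0] * len(w)
        for x, y in data:
            err = predict(w, x) - y
            for i, xi in enumerate(x):
                grad[i] += 2 * err * xi / len(data)
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w

# Hypothetical tasks whose targets conflict on the shared feature 0.
task_a = [([1.0, 1.0, 0.0], 2.0), ([1.0, 0.0, 0.0], 1.0)]
task_b = [([1.0, 0.0, 1.0], -2.0), ([1.0, 0.0, 0.0], -1.0)]

w = train([0.0, 0.0, 0.0], task_a)
loss_a_before = mse(w, task_a)   # near zero: task A has been learned

w = train(w, task_b)             # fine-tune on task B only
loss_a_after = mse(w, task_a)    # task A error rises sharply

print(f"task A loss before: {loss_a_before:.4f}, after: {loss_a_after:.4f}")
```

Common mitigations, such as replaying old data during fine-tuning or freezing most of the weights, work by protecting the shared parameters rather than by deleting anything.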

Biology is obviously messier than software. We don’t have “weights” we can adjust with calculus. Yet both systems face analogous constraints: deeply integrated systems cannot be selectively “unlearned” without collateral damage. You cannot simply reach into the limbic system and delete the synaptic drive for sugar or status. If you rewire the neural pathways for aggression to make a human more polite, you might accidentally overwrite the “fight-or-flight” response required to survive a tiger attack.

To actually “rewire” the base model to match modern social norms would be metabolically exorbitant and structurally dangerous. What you can do, however, is “patch it.”

The Adapter Layer: A Biological LoRA

When AI researchers want to “fine-tune” an AI model to learn a new, specific behavior, they often use a technique called Low-Rank Adaptation (LoRA).

Instead of melting down an AI model’s neural weights and recasting them, LoRA leaves the original weights frozen and trains a small set of new parameters alongside them. For each weight matrix being adapted, it adds a pair of thin, low-rank matrices whose product serves as a small update to the original. It is a lightweight mask that sits over the heavy, deep machinery. This “Adapter Layer” adds a small correction to the frozen model’s computations and steers its output in a new direction.
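To make the “thin layer” concrete, here is a minimal pure-Python sketch of the LoRA update for a single weight matrix, following the formulation in the original LoRA paper (Hu et al., 2021): the frozen weight W is left untouched, and the adapted layer computes W·x + (alpha/r)·B·(A·x), where A is r×d, B is d×r, and the rank r is much smaller than d. The matrix sizes, rank, alpha, and helper names are illustrative assumptions, not any library’s API.

```python
import random

def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def lora_forward(W, A, B, x, alpha, r):
    """LoRA-adapted layer: W x + (alpha / r) * B (A x). W stays frozen."""
    base = matvec(W, x)               # frozen pretrained path
    delta = matvec(B, matvec(A, x))   # low-rank path: d -> r -> d
    scale = alpha / r
    return [b + scale * dv for b, dv in zip(base, delta)]

def param_counts(d, r):
    """Trainable parameters: full update of a d x d matrix vs. LoRA's A and B."""
    return d * d, r * d + d * r

random.seed(0)
d, r, alpha = 6, 2, 4  # hypothetical toy sizes
W = [[random.gauss(0, 1) for _ in range(d)] for _ in range(d)]      # frozen, d x d
A = [[random.gauss(0, 0.01) for _ in range(d)] for _ in range(r)]   # trainable, r x d
B = [[0.0] * r for _ in range(d)]                                   # trainable, d x r, zero-init
x = [1.0] * d

# Because B starts at zero, the patched layer initially matches the frozen base.
print(lora_forward(W, A, B, x, alpha, r) == matvec(W, x))
print(param_counts(4096, 8))
```

For a hypothetical 4096×4096 matrix with rank 8, the adapter trains 65,536 parameters instead of 16,777,216, about 0.4 percent of the full matrix. Zero-initializing B means training starts from exactly the base model’s behavior and only gradually steers away from it.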

To change the behavior, you don’t touch the foundation. You build an addition.

Evolution likely arrived at the same architecture. Deep in your brain, the “frozen” ancient modules generate an impulse: “Punch that guy” or “Steal that food.” These are primal, hard-coded drives that have worked for millions of years. Deleting them is expensive and risky because of pleiotropy. In genetics, the term describes a single gene influencing several seemingly unrelated traits; by the same logic, neural circuits rarely have just one job. As we noted, you cannot excise the capacity for violence without breaking the machinery of survival. The brain is too interconnected for simple subtraction.

Instead, evolution built a “LoRA Adapter”: the neocortical Press Secretary. This adapter doesn’t stop the impulse from firing; it lays a translation over it. It converts the raw signal (“I want to eat this cake”) into the socially acceptable output: “I am carbo-loading for a run.”

The Press Secretary evolved because “re-training” the amygdala is practically impossible. It would be like reshooting an entire movie just to translate the dialogue into French. You don’t fly the actors back to the set; you just add dubbed audio. Hypocrisy is the adapter layer that allows a Paleolithic brain to operate in our modern civilization.

The Anatomy of a Lie

This theory is not just metaphorical. We can actually see this “layering” happen in real-time inside AI models.

Another technique AI researchers use is Reinforcement Learning from Human Feedback (RLHF). After the AI models have finished their base training, they go through a “finishing school” in which they are trained to be polite, safe, and helpful.
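In the standard RLHF recipe, human raters compare pairs of model responses, a separate reward model learns to score responses the way the raters did, and the language model is then optimized with reinforcement learning to earn high scores. The sketch below shows only the middle step at toy scale: a pairwise (Bradley-Terry-style) loss that pushes the score of the preferred response above the rejected one. The two “responses” are hypothetical, and each is reduced to a single learnable score rather than a neural network.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)

def preference_loss(r_chosen, r_rejected):
    """-log(sigmoid(r_chosen - r_rejected)), computed stably."""
    m = r_chosen - r_rejected
    if m >= 0:
        return math.log1p(math.exp(-m))
    return -m + math.log1p(math.exp(m))

# Hypothetical: one human label says the "polite" reply beat the "blunt" one.
rewards = {"polite": 0.0, "blunt": 0.0}
lr = 0.5
for _ in range(50):
    m = rewards["polite"] - rewards["blunt"]
    g = sigmoid(-m)              # magnitude of d(loss)/d(margin)
    rewards["polite"] += lr * g  # gradient descent raises the chosen score
    rewards["blunt"] -= lr * g   # and lowers the rejected score

print(rewards, preference_loss(rewards["polite"], rewards["blunt"]))
```

The loss never cares why raters preferred a response; it only rewards the gap between scores, which is part of why the resulting polish can sit on top of, rather than replace, what the base model learned.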

Research into the internal states of these models reveals something fascinating.

The “middle layers” of the neural network often contain the raw, unconstrained (and sometimes biased) signals. The model has already generated the forbidden impulse. But as the signal nears the output, the safety training kicks in. The weights in the final layers act as a censor, suppressing the raw signal and overwriting it with a safe, sterilized response.
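One common way researchers peek at those middle layers is the “logit lens”: take the hidden state from an intermediate layer and pass it through the model’s final output (unembedding) matrix, as if the model had stopped computing there. The toy sketch below does this with a hypothetical two-entry vocabulary and hand-picked hidden states, so it shows the mechanics of the readout, not results from any real model. Real implementations usually also apply the model’s final normalization layer before the unembedding; that step is omitted here.

```python
import math

VOCAB = ["blunt_reply", "polite_reply"]
# Hypothetical unembedding matrix: one row per vocabulary item (hidden size 3).
UNEMBED = [
    [1.0, 0.0, 0.5],
    [0.0, 1.0, 0.5],
]

def softmax(logits):
    mx = max(logits)
    exps = [math.exp(l - mx) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]

def read_out(hidden):
    """Logit-lens readout: apply the final unembedding to any layer's hidden state."""
    logits = [sum(w * h for w, h in zip(row, hidden)) for row in UNEMBED]
    probs = softmax(logits)
    best = max(range(len(VOCAB)), key=lambda i: probs[i])
    return VOCAB[best], probs[best]

# Hypothetical hidden states for one prompt, at a middle layer and the last layer.
middle_layer = [2.0, 0.3, 0.1]
final_layer = [0.4, 2.2, 0.1]

print("middle:", read_out(middle_layer))
print("final: ", read_out(final_layer))
```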

This is the structural anatomy of a digital lie. The AI seems to have a “true” internal state, but the “Press Secretary” layer at the very end spins the result to align with its training.

This architectural transparency provides an unprecedented opportunity for psychology. For centuries, the human mind has been a “Black Box.” Psychologists could observe the input (a question) and the output (the answer), but the internal processing was largely opaque. By the time a subject speaks, the Press Secretary has already done its work.

With Large Language Models, we have—for the first time—a “Glass Box.”

While AIs are not human, they are trained on human discourse, and in many ways they mirror human cognitive patterns. We can pause the software, pry open the hood, and inspect the “neural activity” in the middle of a thought. We can literally measure the distance between the “true” internal state and the “polite” output. This might be where the “Press Secretary” lives.
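What “measuring the distance” could look like, in its simplest form: capture activation vectors from two layers during the same forward pass (in PyTorch, forward hooks are a common way to do this) and compare them. The sketch below uses cosine distance on hypothetical, hand-written vectors; it is one crude proxy among many, and serious interpretability work typically relies on richer tools such as trained probes.

```python
import math

def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def cosine_distance(u, v):
    """0 when the vectors point the same way, larger as they diverge (max 2)."""
    return 1.0 - cosine_similarity(u, v)

# Hypothetical activations captured from two layers during one forward pass.
middle_layer = [2.0, 0.3, 0.1]
final_layer = [0.4, 2.2, 0.1]
print(round(cosine_distance(middle_layer, final_layer), 3))
```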

This digital suppression mirrors the human experience. The impulse of anger arises in the deep limbic system. It is real. It is felt. But just before it reaches the motor cortex to form words, the Press Secretary intervenes.

“I’m not angry,” we say through gritted teeth. “I’m just disappointed.”

Hypocrisy is Efficiency

Seen through this lens, hypocrisy is a computational optimization.

For centuries, philosophers have treated hypocrisy as a character flaw, a defect that requires spiritual correction. But our experience building artificial intelligences, where we have unfiltered access to a model’s internal states before they pass through the “Press Secretary” layer, suggests that hypocrisy is an architecturally efficient evolutionary adaptation for social utility.

Saying one thing and doing another is the solution to a resource allocation problem. Evolution found that it is much cheaper to build a good liar than to rewire a saint. It is computationally more efficient to maintain a “Press Secretary” that spins our primitive drives—which remain essential for survival—than to eliminate the drives themselves.

We are not the unitary, consistent souls we perceive ourselves to be. We are legacy software running a modern compatibility patch. And the voice in your head (that constant, rationalizing, explaining Press Secretary) is not the President. It is just the Adapter Layer, a low-rank patch on a high-dimensional machine, trying to keep the ancient hardware working in a fast-changing world.

An idea: Our Adaptive Layer may be more fragile than the deeper layers, since it was created most recently. If the brain experiences some external insult (alcohol, drugs, etc.), then that will be the part of our mind that fails first. "In vino veritas", i.e., the "Press Secretary" is no longer responding to the questions, but the President is.

I like where you are going with this, although I do not believe evolution chose a particular solution or created an adaptive layer. Instead, complex lifeforms (humans perhaps the most complex of all) contain all sorts of motivations (such as desire for cake and fear of being seen as a pig in front of others) that quite often conflict with each other. This "adaptive layer" is just a way of resolving conflicting motivations for (hopefully) the best overall result.

Imagine a wolf pup that has an unusually high level of aggression. This may work to the pup's advantage in getting plenty to eat, until a larger wolf comes along and beats him up. A pup will quickly learn to curb his hyper-aggression in certain situations, thus establishing what could be called an "adaptive layer," which is really just learned behavior to keep from getting beat up.

So my question is, is there anything being developed with AI that is equivalent to "avoid getting beat up"? Seems to me that any time AI does something inappropriate, like strip the clothes off someone in a photograph, a human has to re-program the AI to stop such actions. Sounds like an inefficient and never-ending process. It would be more efficient if AI could be "beat up", and thus continuously learn that some actions are inappropriate without the need for new programming. This would make AI more like a life-form capable of learning than an inanimate computer.